plant identification station

for “the sensitive botanist”

PROJECT DETAILS

LOCATION: PITTSBURGH, PA

WHEN: FALL 2023

TYPE OF WORK: ACADEMIC

TEAMMATES: EUGINA CHUN, LIA PURNAMASARI, AUDREY REILEY

PROFESSORS: DINA EL-ZANFALY, ANDREW TWIGG

COURSE: INTERACTION DESIGN STUDIO I

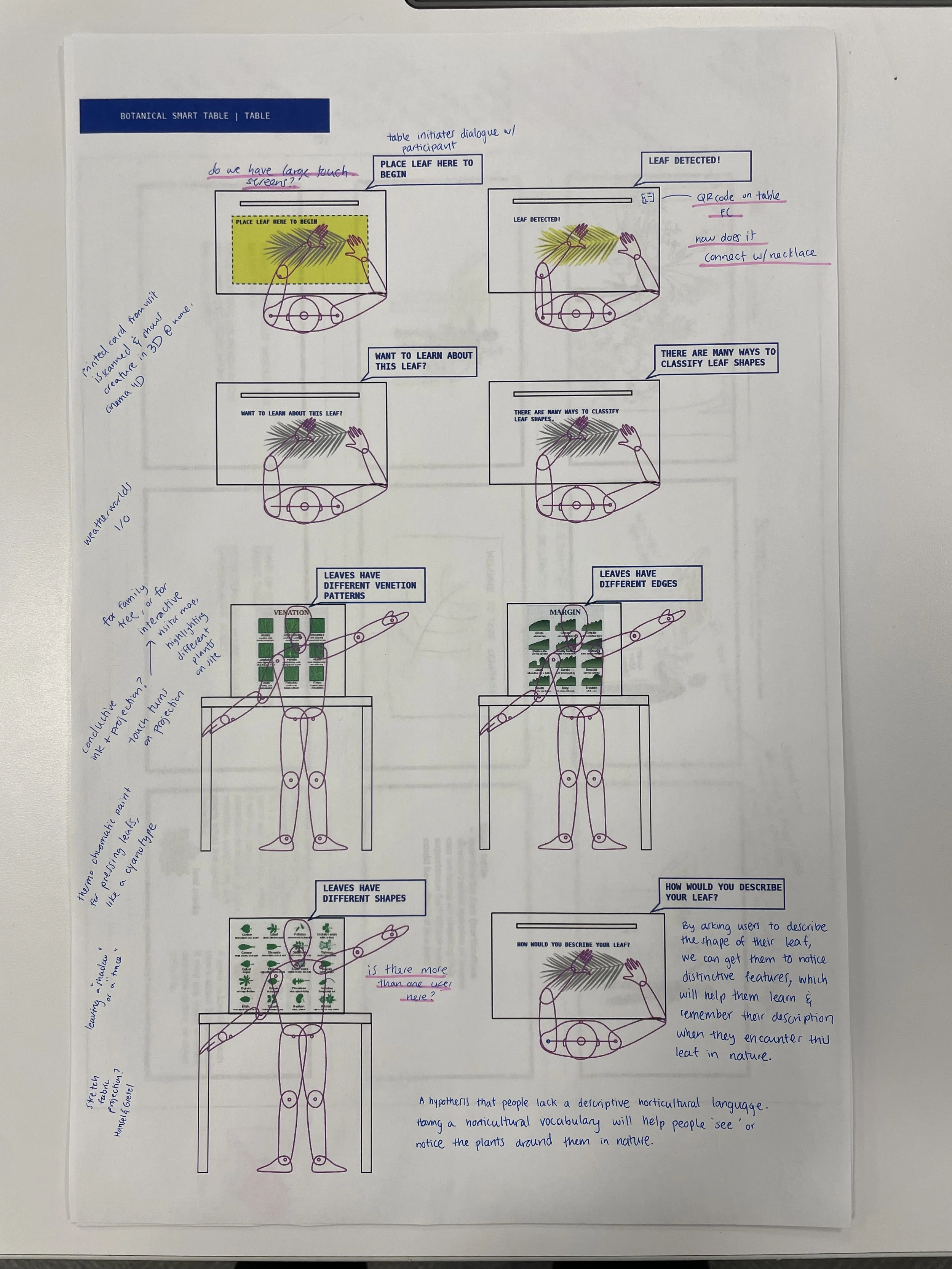

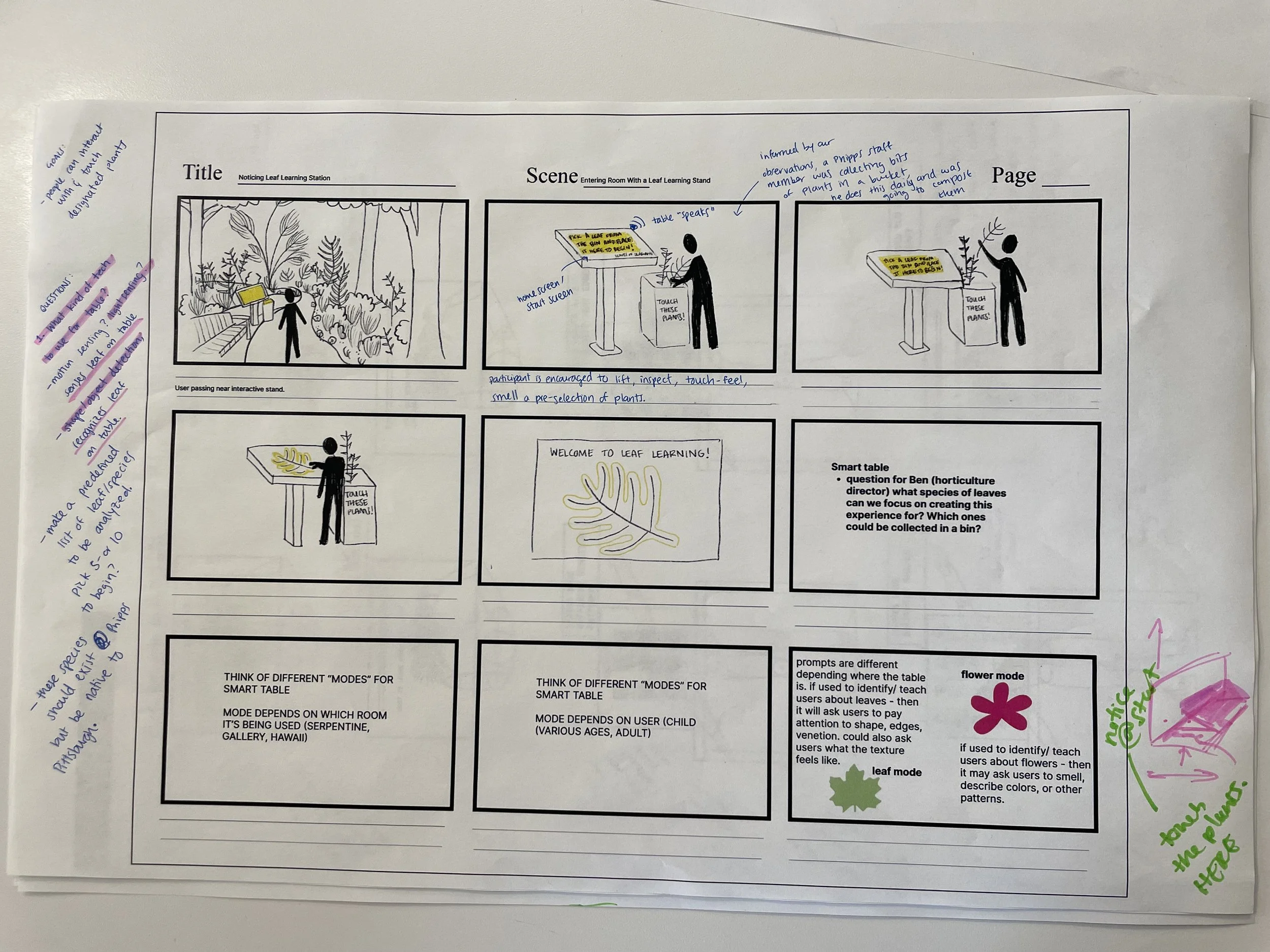

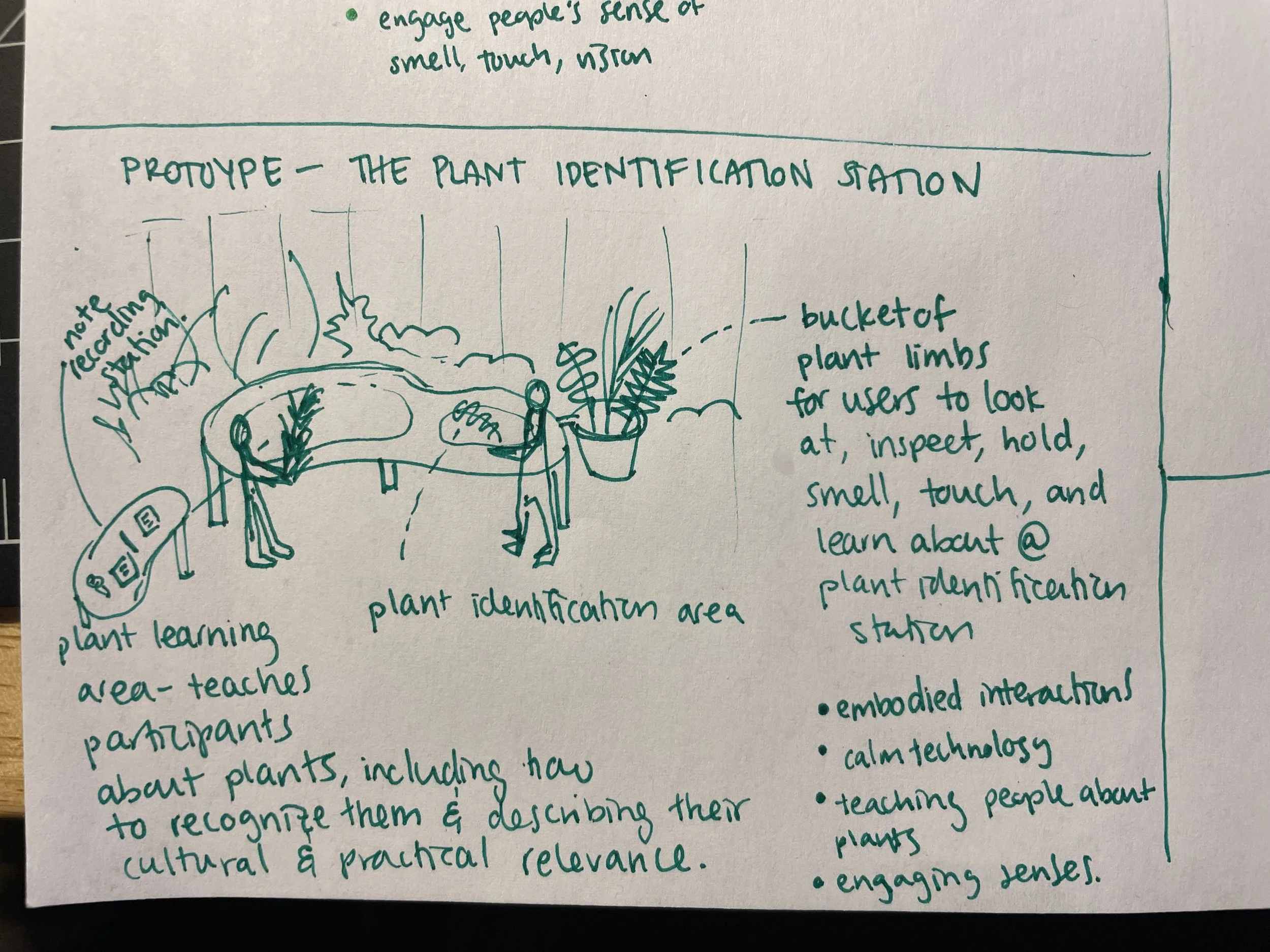

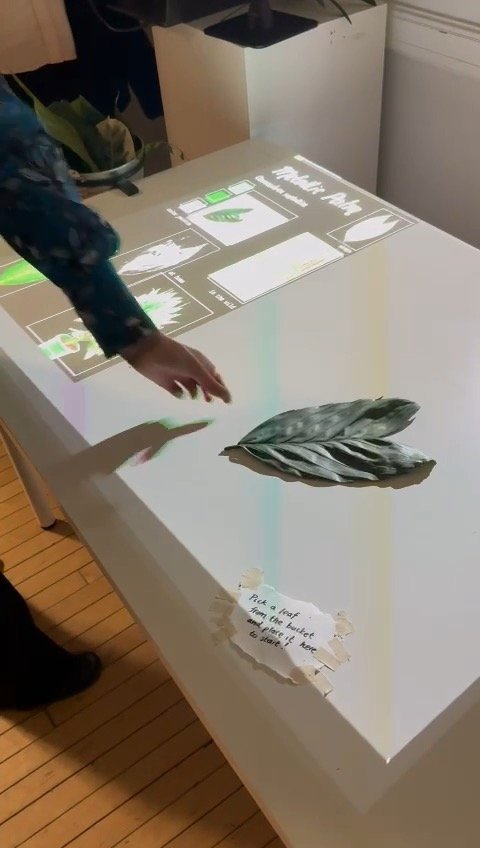

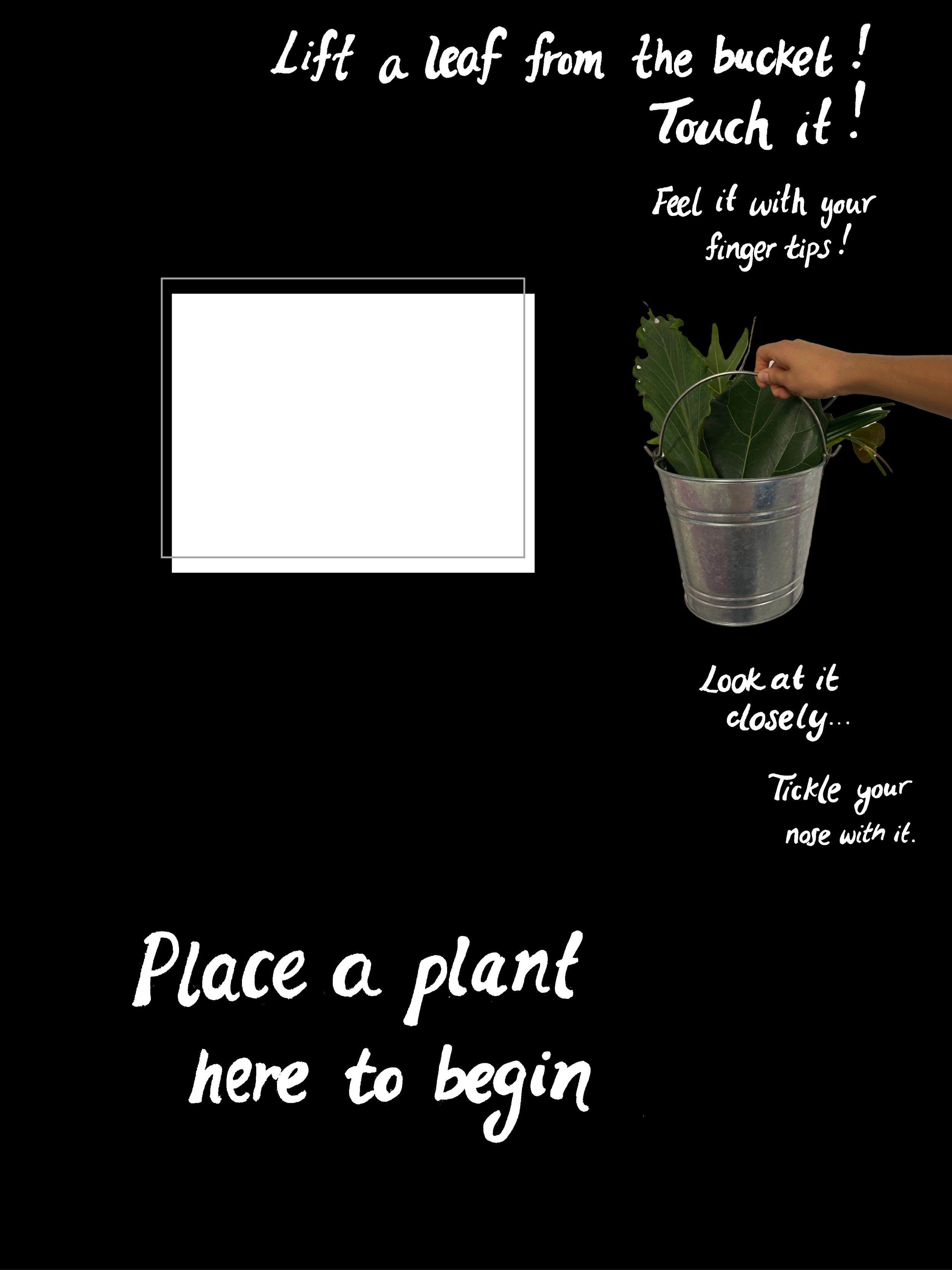

An important part of our Plant Identification Station is the ability to interact with real plants. We heard from several visitors at Phipps Conservatory and Botanical Gardens that they wanted to touch plants and engage with them more directly. So, in our design, we offer visitors exactly what they asked for and allow them to inspect, touch, or sniff real loose leaves and plant limbs.

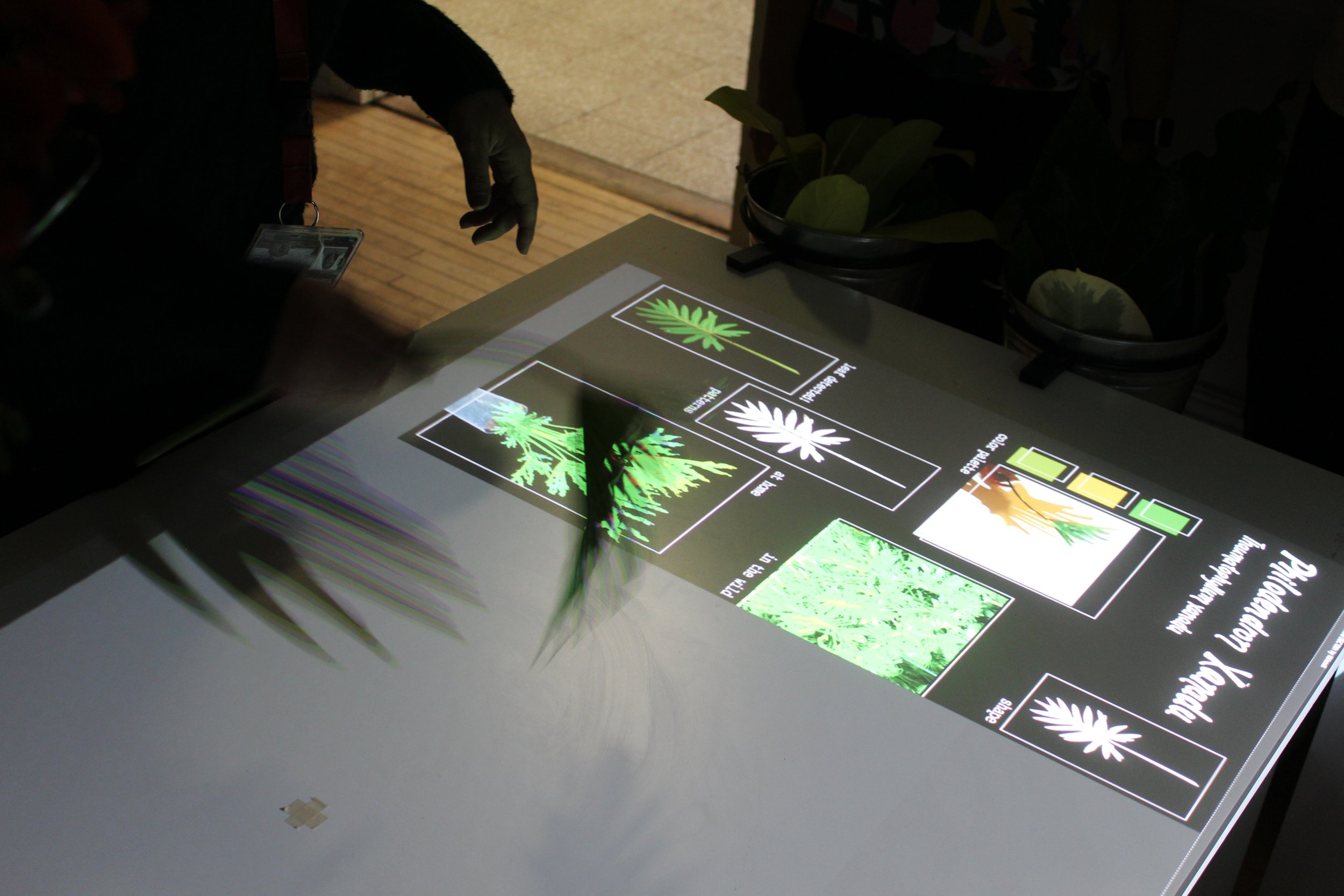

The interactive table not only recognizes real plants placed on top of it, but also encourages people to place them there by offering new plant vocabulary that draws their attention to details they may not have noticed before. For example, it asks them to describe venation patterns and edge shapes with precise, descriptive language. Finally, the experience seeks to establish meaningful connections between plants and people by explaining each plant’s cultural significance, such as its presence in common foods, traditions, and rituals, or the history behind its name.

The photos below show people touching and interacting with plants. We heard people say they wanted to touch plants, so there they go! Our interaction is all about getting people to touch plants.

DEMO DAY REACTIONS

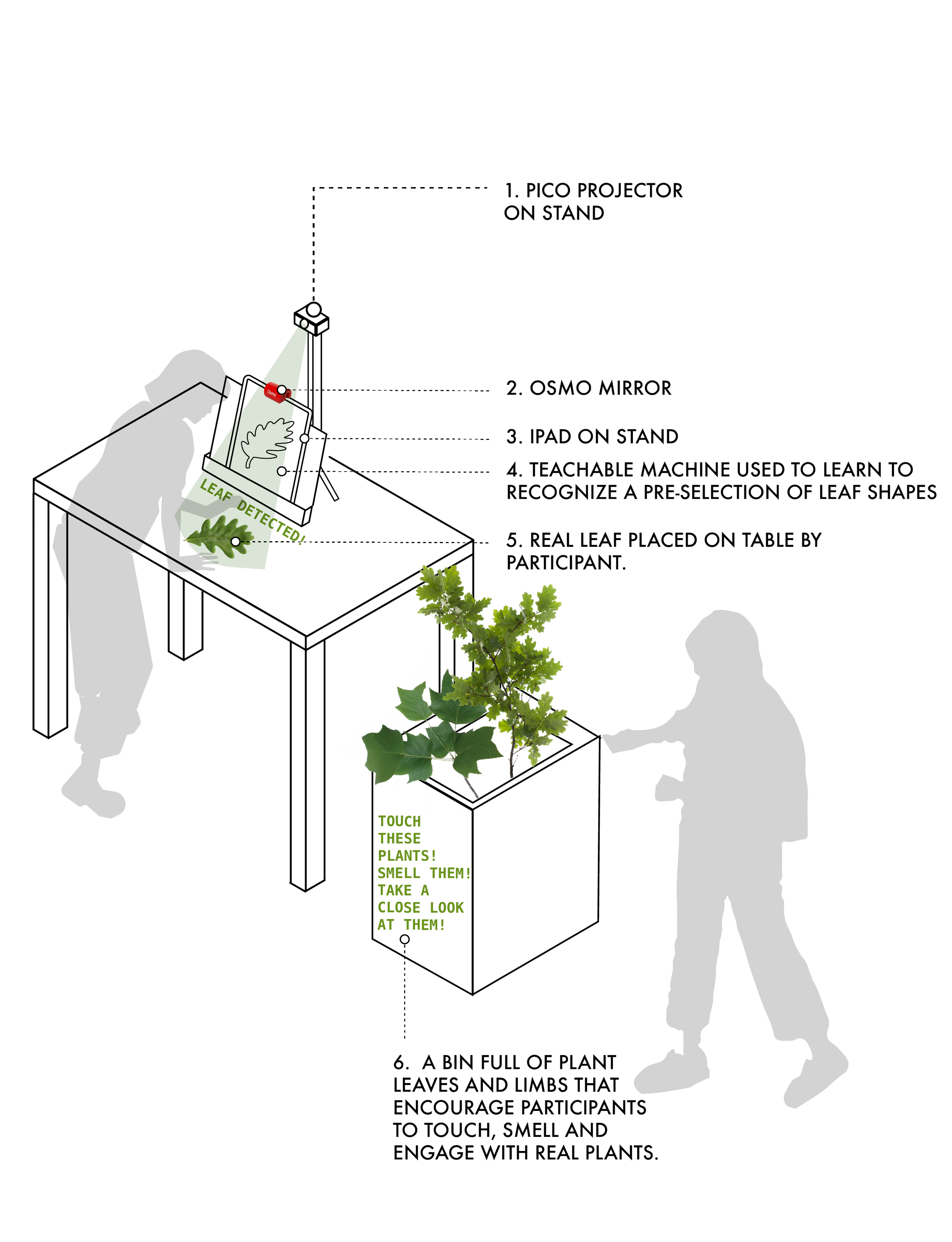

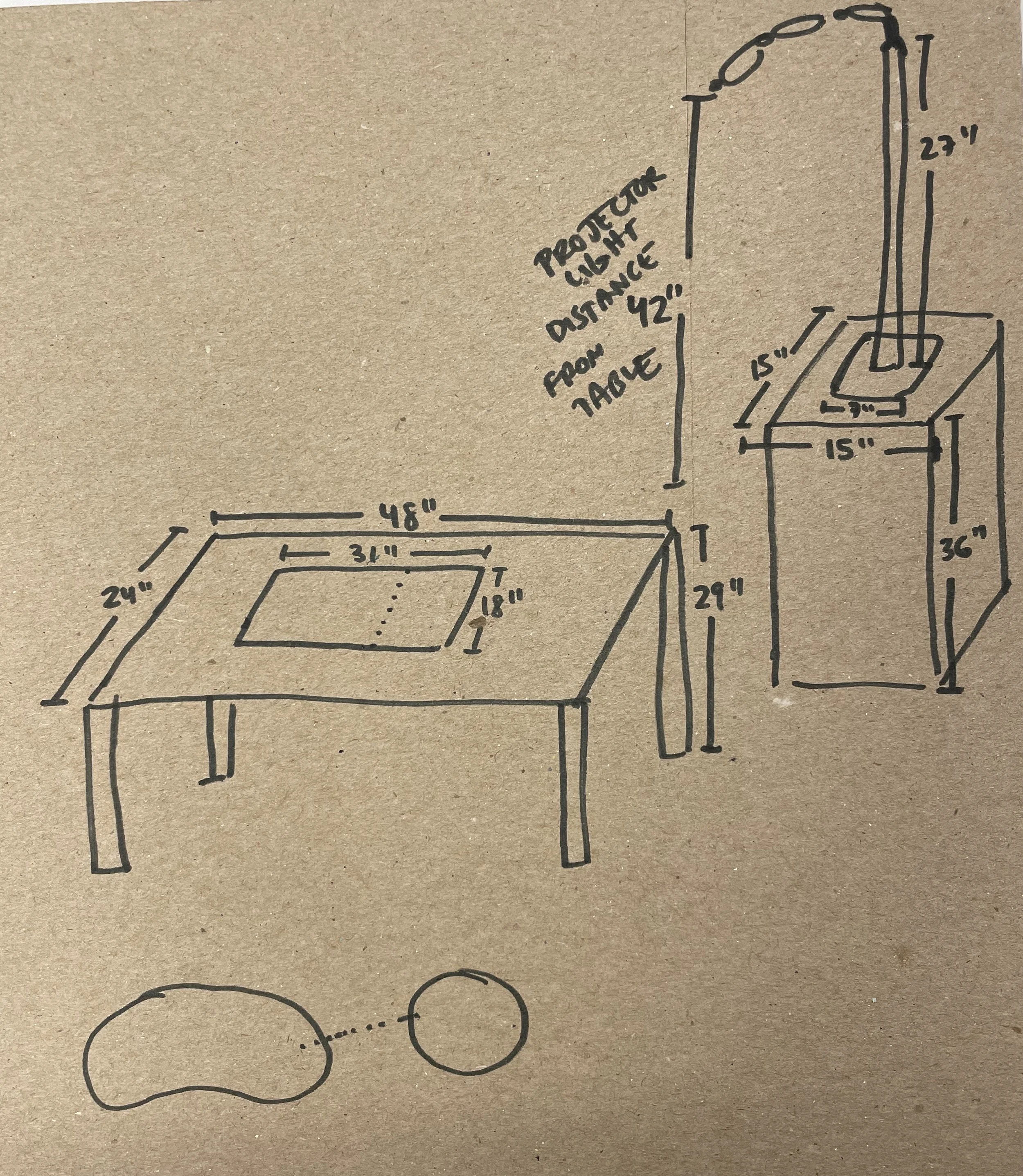

SYSTEM SET-UP

TECHNOLOGIES LEVERAGED

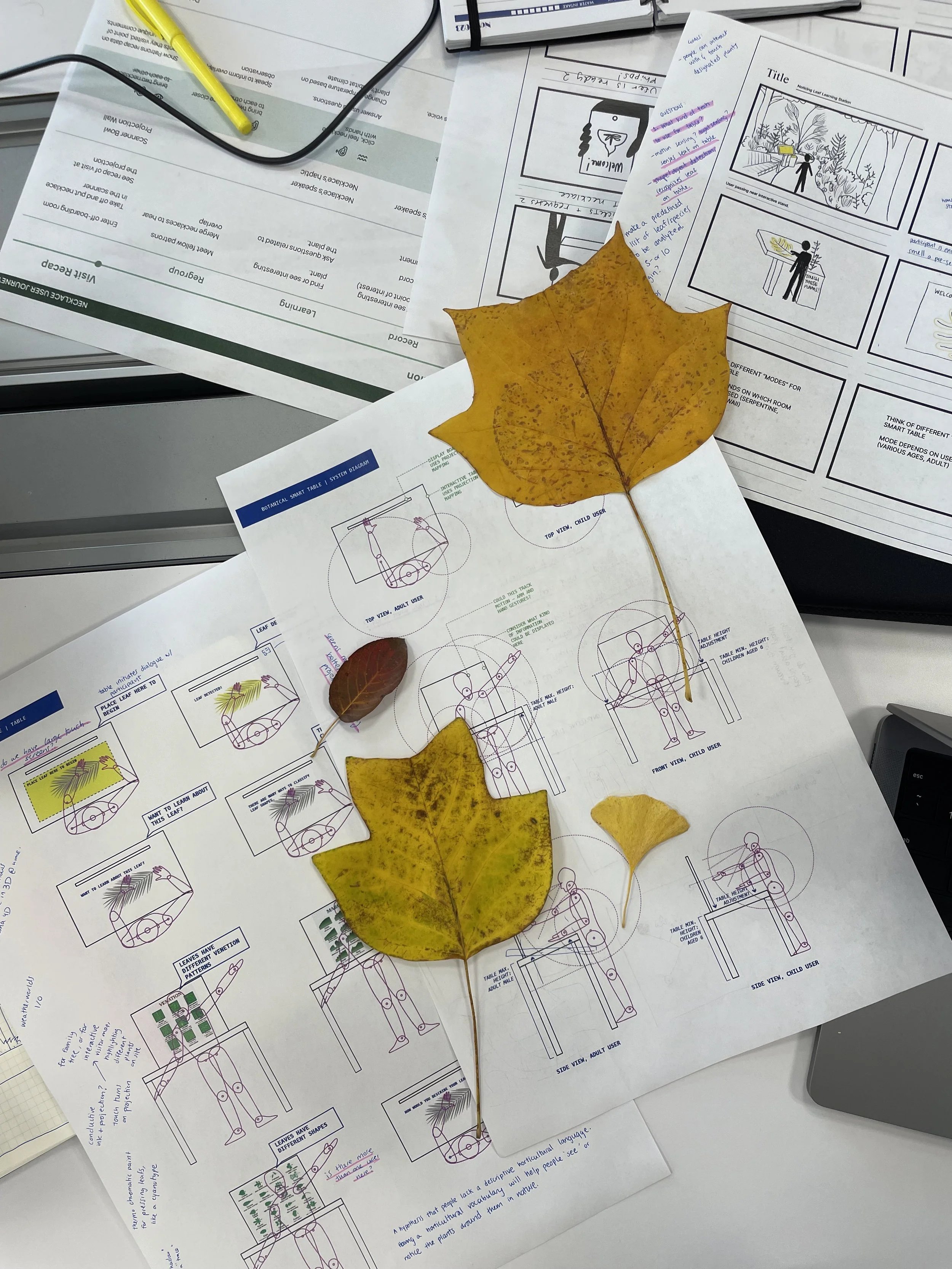

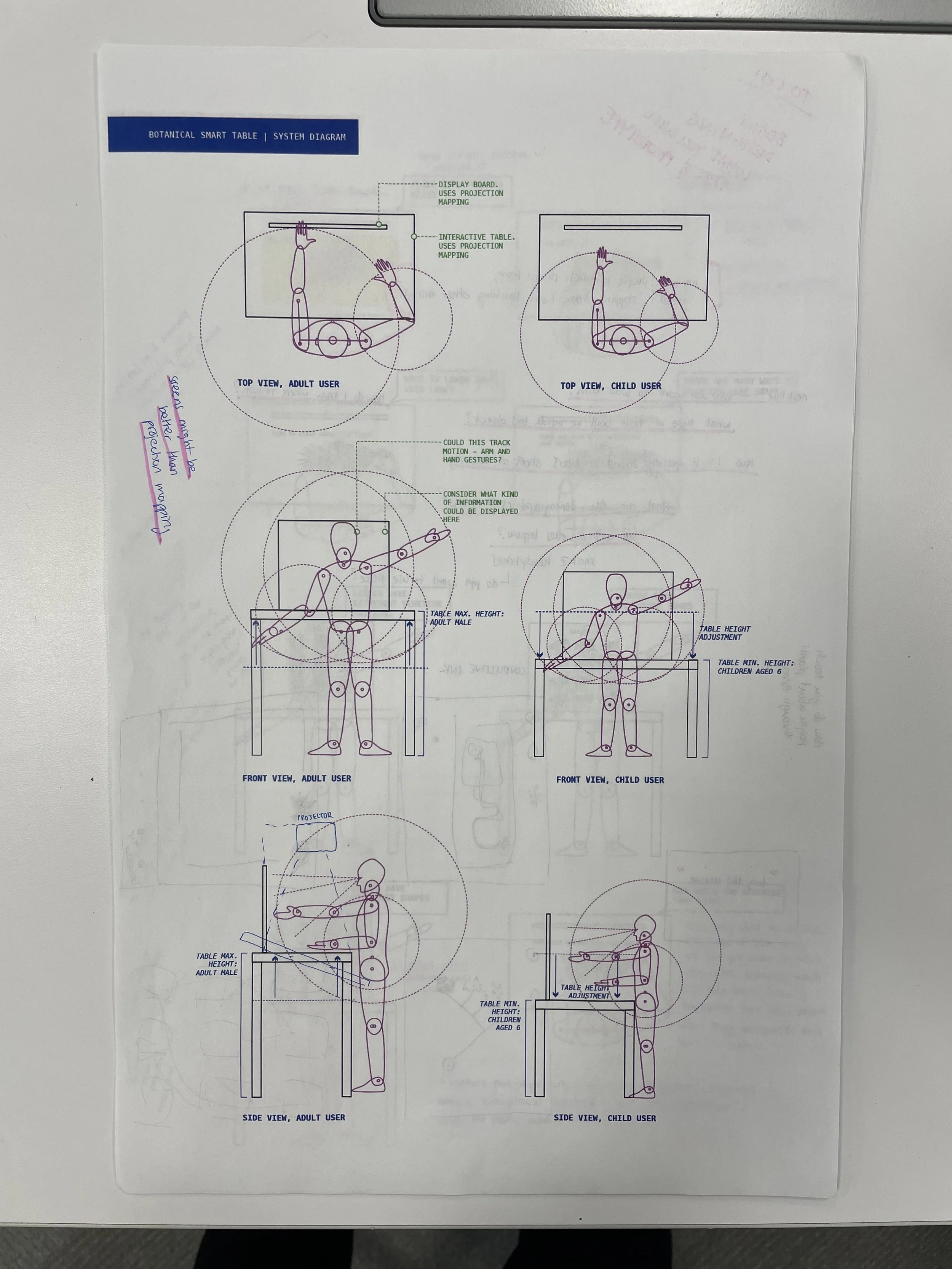

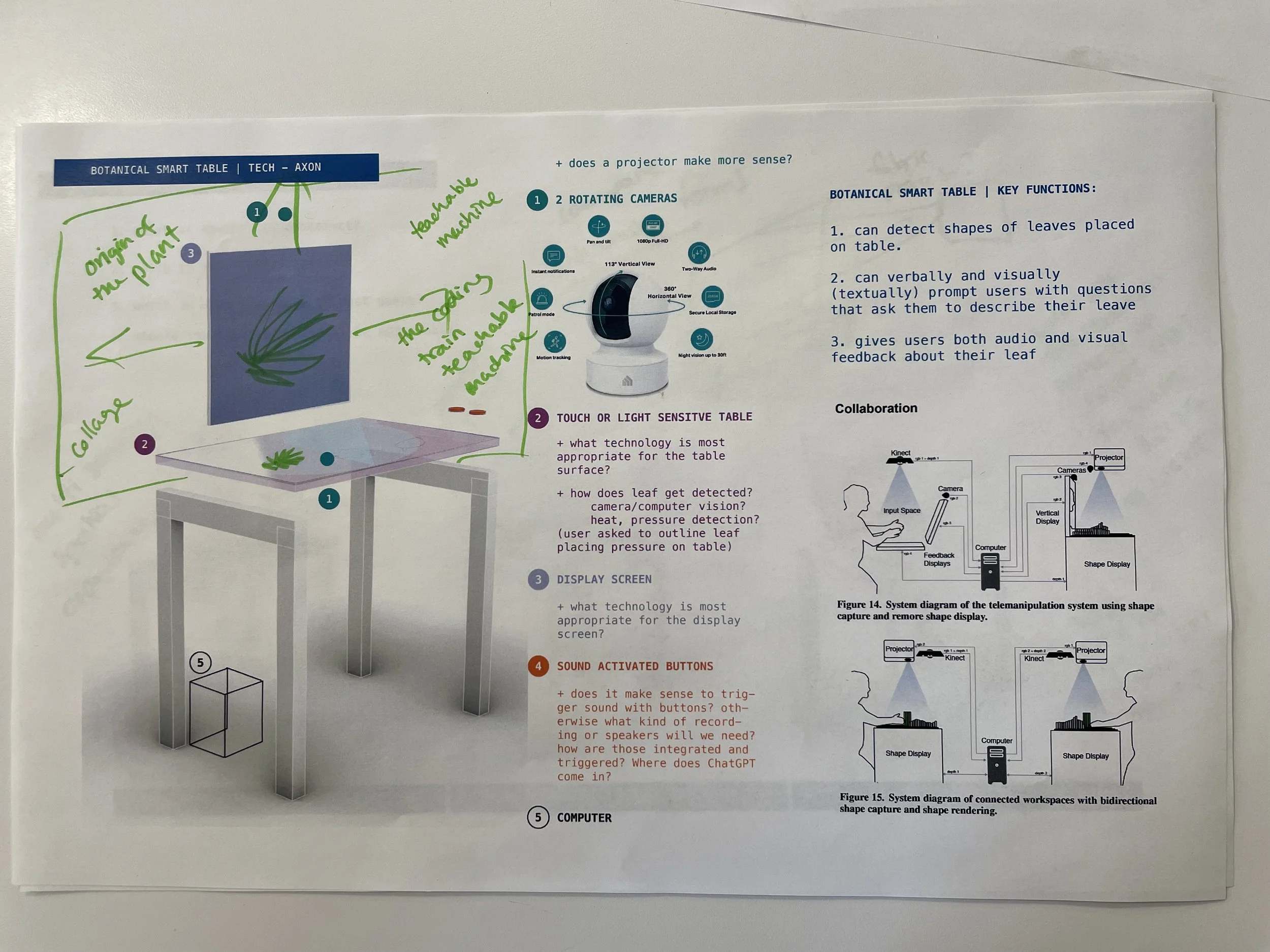

PROTOTYPING | INITIAL SYSTEM DIAGRAM

Our plant ID station went through several iterations. This is our initial prototype, in which we used an iPad to recognize the leaves and displayed a very simple image on the iPad screen to indicate that a leaf had been recognized.

TECHNOLOGIES LEVERAGED:

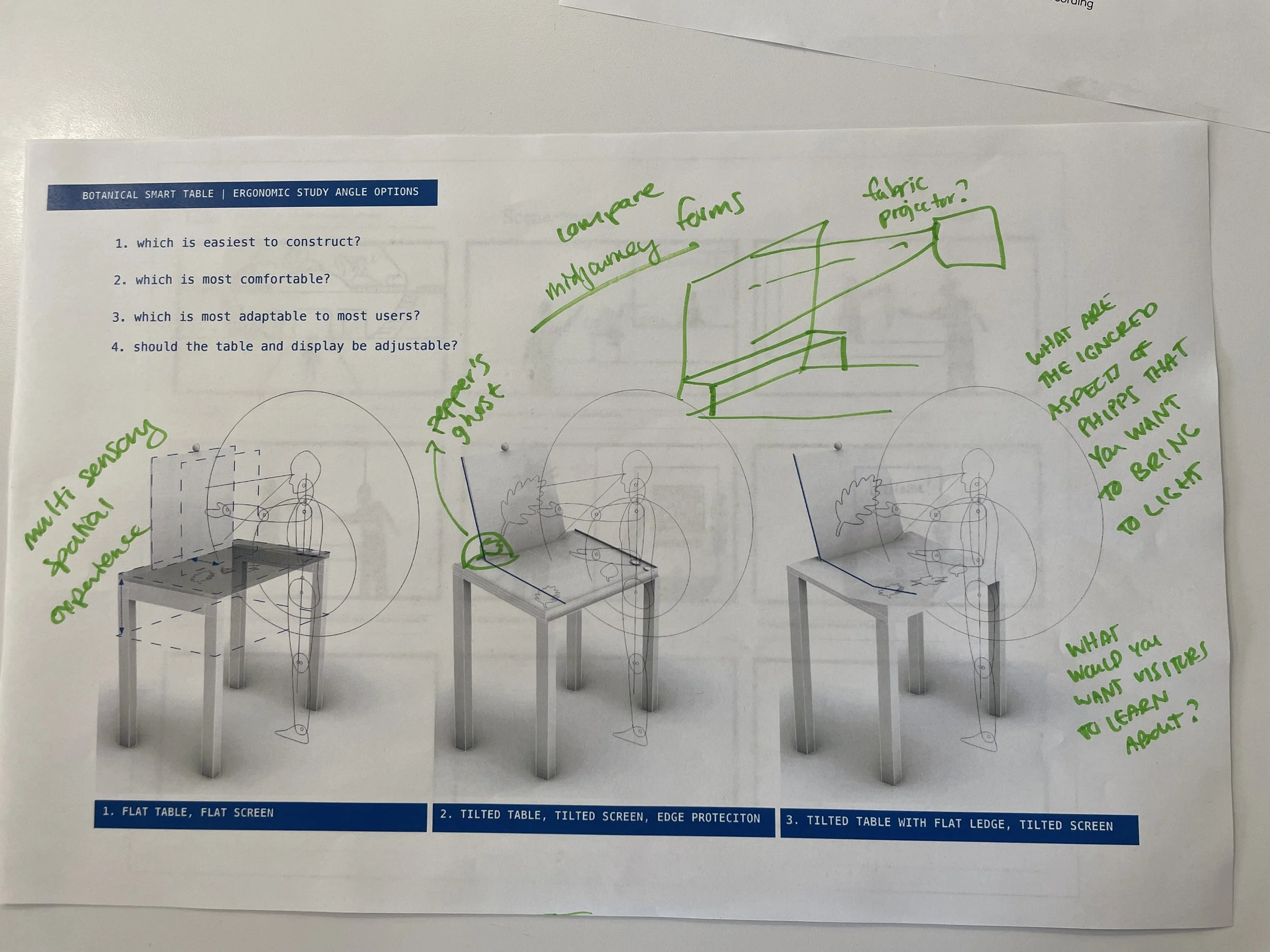

PROTOTYPING | EVOLVING SYSTEM DIAGRAMS

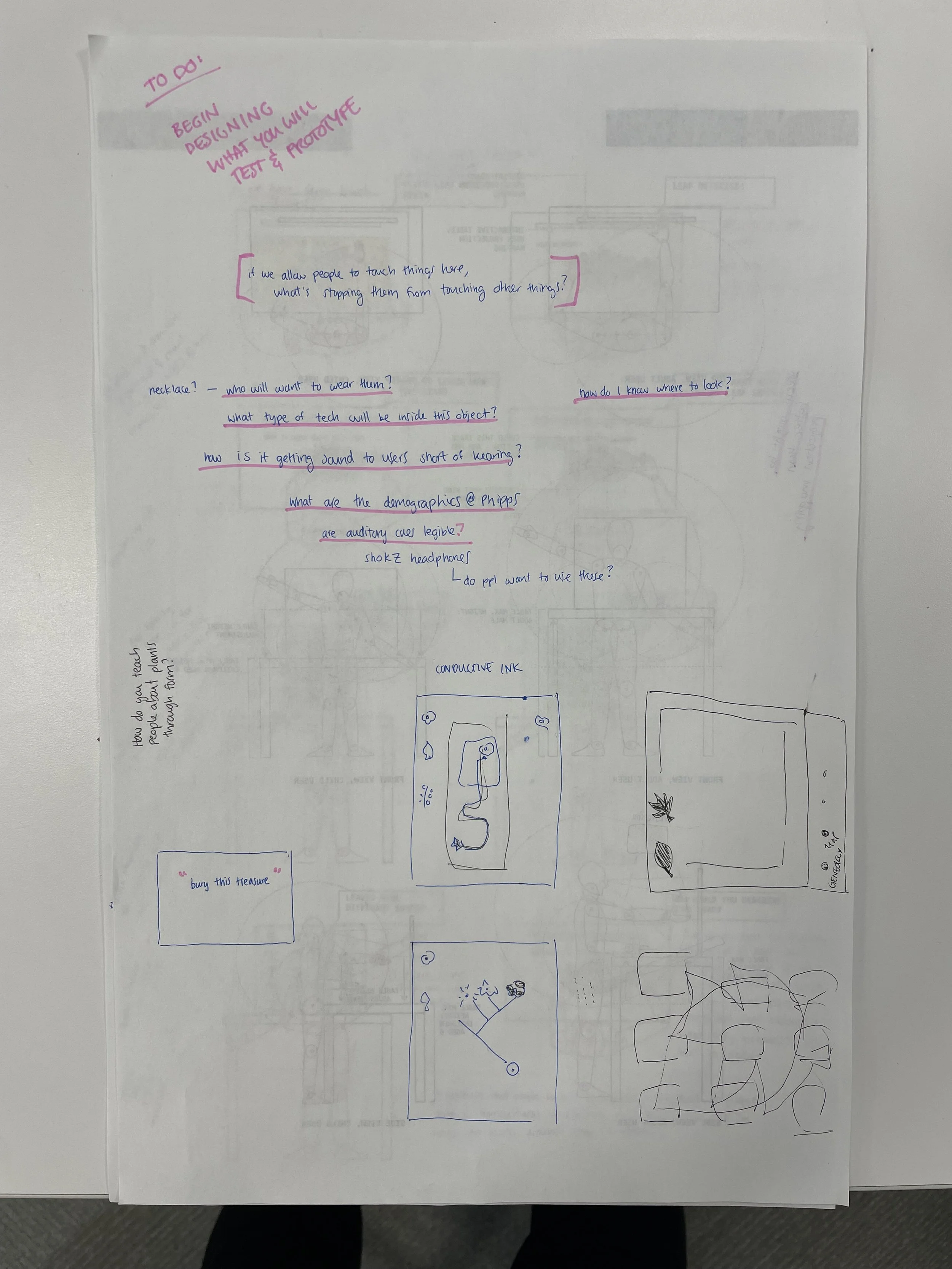

UI PROTOTYPING

We realized our interaction would feel more magical and immersive if it were projected onto the table the participants were at instead of shown on the small iPad screen, and if the display were more informative. So, for our demonstration prototype, we updated the visual display that is projected when a leaf is recognized. We also used a potted orchid as a stand for our webcam to keep it hidden. If we had more time, we could have created stronger wow-factor interactions. What if, for example, the leaf recognition triggered an animation or activated a holographic 3D display?

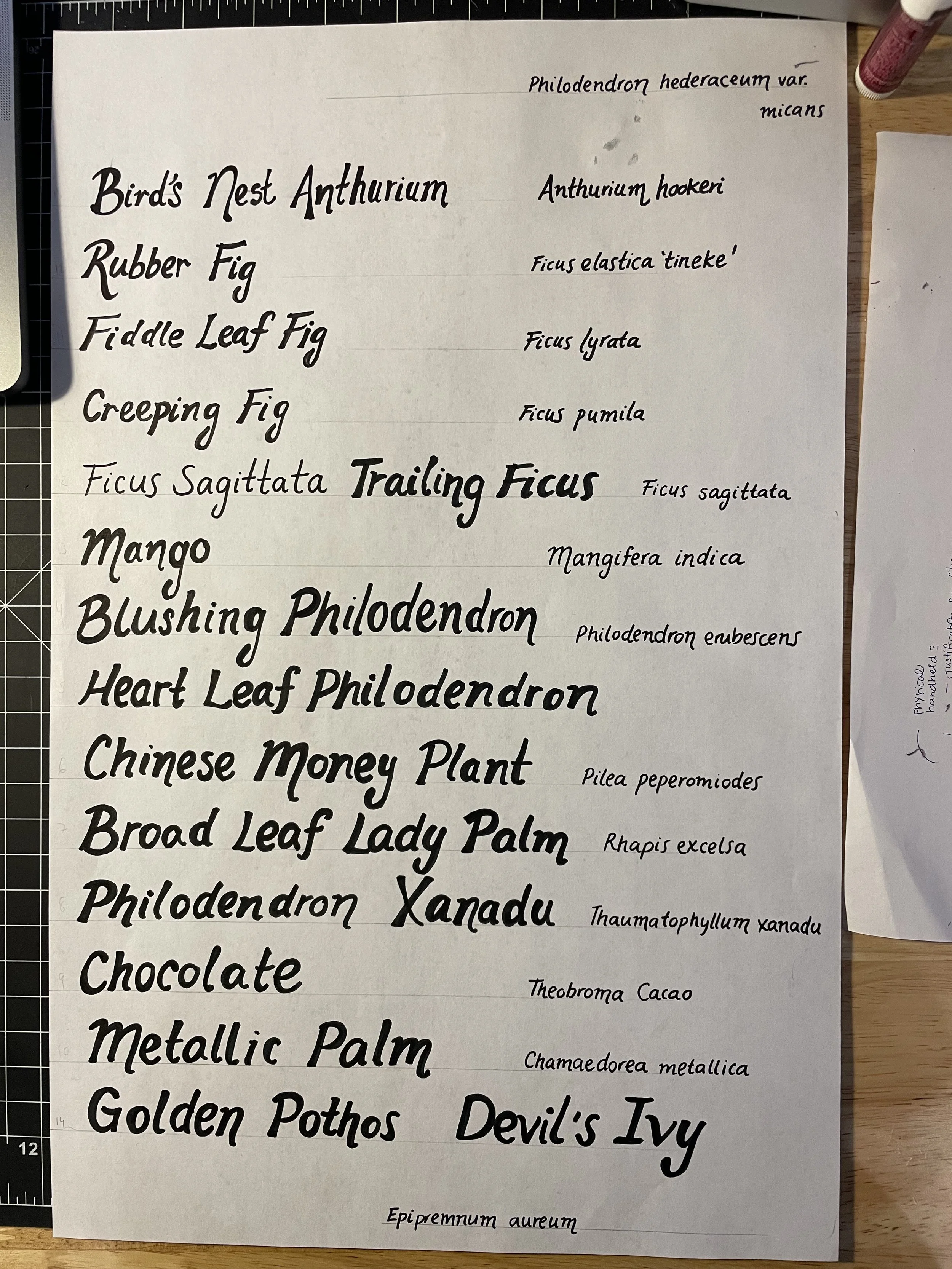

Deciding which specific plants to use for these interactions was difficult. Early on, we had the idea of offering loose leaves and plant limbs for visitor inspection and table identification, but which species were suitable for that? The species selection was also challenging because winter was coming, and the tree leaves I was hoping to train our model on would be gone by mid-December, when the final project was due.

Fortunately, we found a solution during one of our site visits. Because we were paying close attention to what other people were doing in the space, Eugina noticed that a Phipps staff member was carrying around a bucket of plant limbs. We approached her and asked what she was collecting them for. When she told us she was just doing a daily cleanup and that the collected plant material would be composted, we asked if we could have it. This initiated a series of leaf pickups that I used to train a model using Google’s Teachable Machine. After each leaf pickup, I would take hundreds of photos of the different leaves and tag them with their names so the model could learn to recognize them.

A note on the species selected

Our leaf recognition model was trained on photos of the fourteen species shown here. We had multiple samples of each species and photographed each leaf from different angles. It was a relief that the model could recognize not just the leaves we trained it on, but other leaves of the same species. It was also great that these leaves were consistently available from Phipps, since they come from species that are constantly shedding leaves.
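
One way to check this kind of generalization deliberately is to keep every photo of a few leaf specimens out of training and use only those photos for testing. The sketch below is illustrative only: the file names, species labels, specimen IDs, and the `split_by_specimen` helper are all hypothetical and are not part of the project's actual pipeline.

```python
import random
from collections import defaultdict


def split_by_specimen(photos, holdout_fraction=0.25, seed=0):
    """Split photos so that no leaf specimen appears in both sets.

    photos: list of (filename, species, specimen_id) tuples (hypothetical layout).
    Returns (train, test) lists of the same tuples.
    """
    # Collect the distinct specimens photographed for each species.
    specimens_by_species = defaultdict(set)
    for _, species, specimen_id in photos:
        specimens_by_species[species].add(specimen_id)

    rng = random.Random(seed)
    held_out = set()
    for species, specimens in specimens_by_species.items():
        ordered = sorted(specimens)
        rng.shuffle(ordered)
        # Hold out at least one specimen per species, but never all of them.
        n_hold = max(1, int(len(ordered) * holdout_fraction))
        n_hold = min(n_hold, len(ordered) - 1)
        held_out.update((species, s) for s in ordered[:n_hold])

    train = [p for p in photos if (p[1], p[2]) not in held_out]
    test = [p for p in photos if (p[1], p[2]) in held_out]
    return train, test


# Placeholder data: 2 species, 4 leaf specimens each, 3 angles per leaf.
photos = [
    (f"{species}_leaf{leaf}_angle{angle}.jpg", species, leaf)
    for species in ("species_a", "species_b")
    for leaf in range(4)
    for angle in range(3)
]

train, test = split_by_specimen(photos)
print(len(train), "training photos,", len(test), "held-out photos")
```

The training photos would go into the model as usual, and the held-out photos could then be shown to the trained model to see whether it recognizes leaves it has never seen from any angle.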